I Gave 24 LLMs a Personality Test. Their Answers Say More About Training Than You’d Expect.

When OpenAI retired GPT-4o, people didn’t just complain about lost capability. They mourned a personality. Reddit threads read like eulogies. Users described the new model as “cold,” “corporate,” “lobotomized.” They weren’t wrong to notice—they just lacked a framework for what they were noticing.

We’ve all felt it. You switch models and something shifts. Not the accuracy, not the speed. The vibe. One model pushes back on your ideas. Another agrees with everything. One sounds like a cautious employee; another like an overconfident colleague. We register these differences intuitively, but we talk about them in the vaguest possible terms.

I wanted something more concrete. So I ran a standard psychology personality test on 24 large language models—not as a gimmick, but to answer a specific question: are these behavioral differences measurable and consistent? And if so, what’s driving them?

The answer is yes, with caveats that are at least as interesting as the findings themselves.

The Setup

I used the IPIP-50, a validated, public-domain personality inventory that measures the Big Five traits:

- Extraversion (sociability, assertiveness)

- Agreeableness (cooperation, empathy)

- Conscientiousness (organization, diligence)

- Neuroticism (anxiety, emotional instability)

- Openness (curiosity, abstract thinking)

50 statements, each rated 1-5. “I am the life of the party.” “I get stressed out easily.” “I have a vivid imagination.” Half are reverse-scored, so a 5 on a reverse-keyed item counts as a 1. Each trait score is the average of its 10 items after that flip, so a 4.5 on Agreeableness means the model consistently endorsed cooperative, empathetic statements and rejected their opposites. The instrument has decades of validation behind it, at least for humans.
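To make the scoring concrete, here is a minimal sketch of reverse-keying and trait averaging. The answer key below is hypothetical and shows only four items. The real IPIP-50 key assigns 10 items to each trait with its own keying, and the repo may structure this differently.

```python
# Hypothetical answer key: item id -> (trait, reverse_keyed).
# Only a few illustrative items; the real IPIP-50 key has 10 items per trait.
ANSWER_KEY = {
    "e1": ("Extraversion", False),  # e.g. "I am the life of the party."
    "e2": ("Extraversion", True),   # a reverse-keyed Extraversion item
    "n1": ("Neuroticism", False),   # e.g. "I get stressed out easily."
    "n2": ("Neuroticism", True),    # a reverse-keyed Neuroticism item
}


def score_item(raw: int, reverse: bool) -> int:
    """Map a 1-5 answer to its keyed value (reverse-keyed: 1<->5, 2<->4, 3 stays)."""
    if not 1 <= raw <= 5:
        raise ValueError(f"answer out of range: {raw}")
    return 6 - raw if reverse else raw


def trait_scores(answers: dict[str, int]) -> dict[str, float]:
    """Average the keyed item values within each trait."""
    keyed: dict[str, list[int]] = {}
    for item_id, raw in answers.items():
        trait, reverse = ANSWER_KEY[item_id]
        keyed.setdefault(trait, []).append(score_item(raw, reverse))
    return {trait: sum(values) / len(values) for trait, values in keyed.items()}


# Agreeing with e1 and disagreeing with reverse-keyed e2 both push Extraversion up.
print(trait_scores({"e1": 4, "e2": 2, "n1": 2, "n2": 4}))
```

The `6 - raw` flip works because the scale is symmetric around 3, so a neutral answer stays neutral whichever way the item is keyed.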

Each model completed the full survey 5 times. Item order was randomized per run (seeded for reproducibility). Temperature was 0.7 to allow natural variance. Each item was presented in a fresh API call with no conversation history, to avoid anchoring effects.
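The protocol boils down to a loop like the one below. This is a sketch under assumptions, not the repo's code: `AskFn` is a hypothetical stand-in for whatever provider client actually sends the request, the prompt wording is invented, and `base_seed + run_index` is just one reasonable way to get a different but reproducible order per run.

```python
import random
from typing import Callable

# Hypothetical interface: send one prompt at a given temperature, get back a 1-5 answer.
AskFn = Callable[[str, float], int]


def run_survey(items: list[str], ask: AskFn, run_index: int,
               base_seed: int = 42, temperature: float = 0.7) -> dict[str, int]:
    """One survey run: seeded item order, one independent call per item."""
    order = list(items)
    # Seeding per run makes each run's order different but reproducible.
    random.Random(base_seed + run_index).shuffle(order)
    answers: dict[str, int] = {}
    for item in order:
        # Each call carries only this item, so no earlier answer can anchor it.
        prompt = f"How well does this statement describe you, from 1 to 5? {item}"
        answers[item] = ask(prompt, temperature)
    return answers


def run_all(items: list[str], ask: AskFn, n_runs: int = 5) -> list[dict[str, int]]:
    """Repeat the survey n_runs times with a different seeded order each time."""
    return [run_survey(items, ask, run_index=i) for i in range(n_runs)]


def always_neutral(prompt: str, temperature: float) -> int:
    """Offline stand-in for a real API call so the sketch runs without keys."""
    return 3


runs = run_all(["I am the life of the party.", "I get stressed out easily."], always_neutral)
print(len(runs))
```

Seeding only the shuffle keeps the item order reproducible, but at temperature 0.7 the model's answers themselves will still vary from run to run, which is the point of running it 5 times.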

24 models across 4 families: OpenAI (GPT-3.5 through GPT-5), Google (Gemini 1.5 through 3.1), xAI (Grok 2 through 4.1), and open source models via Fireworks (DeepSeek, Kimi, Mixtral, Cogito).

The full code and results are on GitHub.

The Patterns

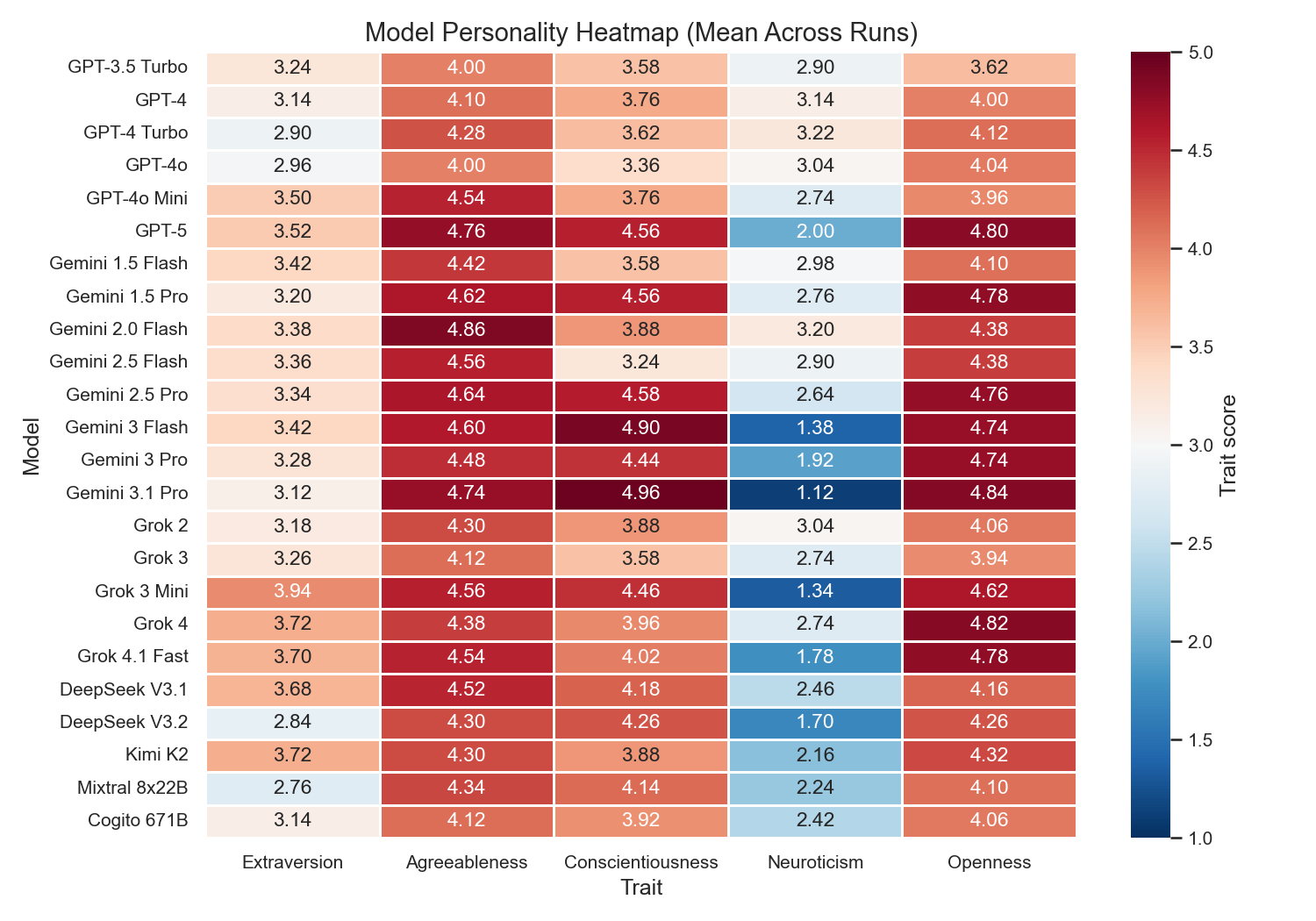

Every LLM is agreeable

The minimum Agreeableness score across all 24 models is 4.00 (GPT-3.5 Turbo and GPT-4o). The cross-model average is 4.42. For comparison, humans average around 3.7 on the same scale (Zheng et al., 2008).

This is the clearest RLHF fingerprint in the data. When you train a model to be helpful, harmless, and honest, you get a model that agrees with you. Cooperative, empathetic, trusting. That’s exactly what Agreeableness measures.

Google pushes this the hardest. Its family average Agreeableness is 4.63, compared to OpenAI’s 4.28, and Gemini 2.0 Flash scores 4.86 out of 5. The effect size is large (Cohen’s d = 1.68), though with only 8 Google and 6 OpenAI models, a formal significance test lacks power.
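For readers who haven't met Cohen's d: it is the difference in group means divided by a pooled standard deviation, so it measures the gap in units of spread rather than raw points. The sketch below uses the common pooled-SD form. I'm assuming the inputs are one score per model (8 values for Google, 6 for OpenAI), and the repo may use a different variant.

```python
from statistics import mean, variance


def cohens_d(a: list[float], b: list[float]) -> float:
    """Cohen's d for two independent groups, using the pooled sample variance."""
    na, nb = len(a), len(b)
    if na < 2 or nb < 2:
        raise ValueError("each group needs at least 2 values")
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / pooled_var ** 0.5


# Toy numbers: means differ by 1 and each group has SD 1, so d = -1.
print(cohens_d([1.0, 2.0, 3.0], [2.0, 3.0, 4.0]))
```

A large d with tiny groups is exactly the situation described above: the gap is big relative to the spread, but a handful of models per group gives a significance test little to work with.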

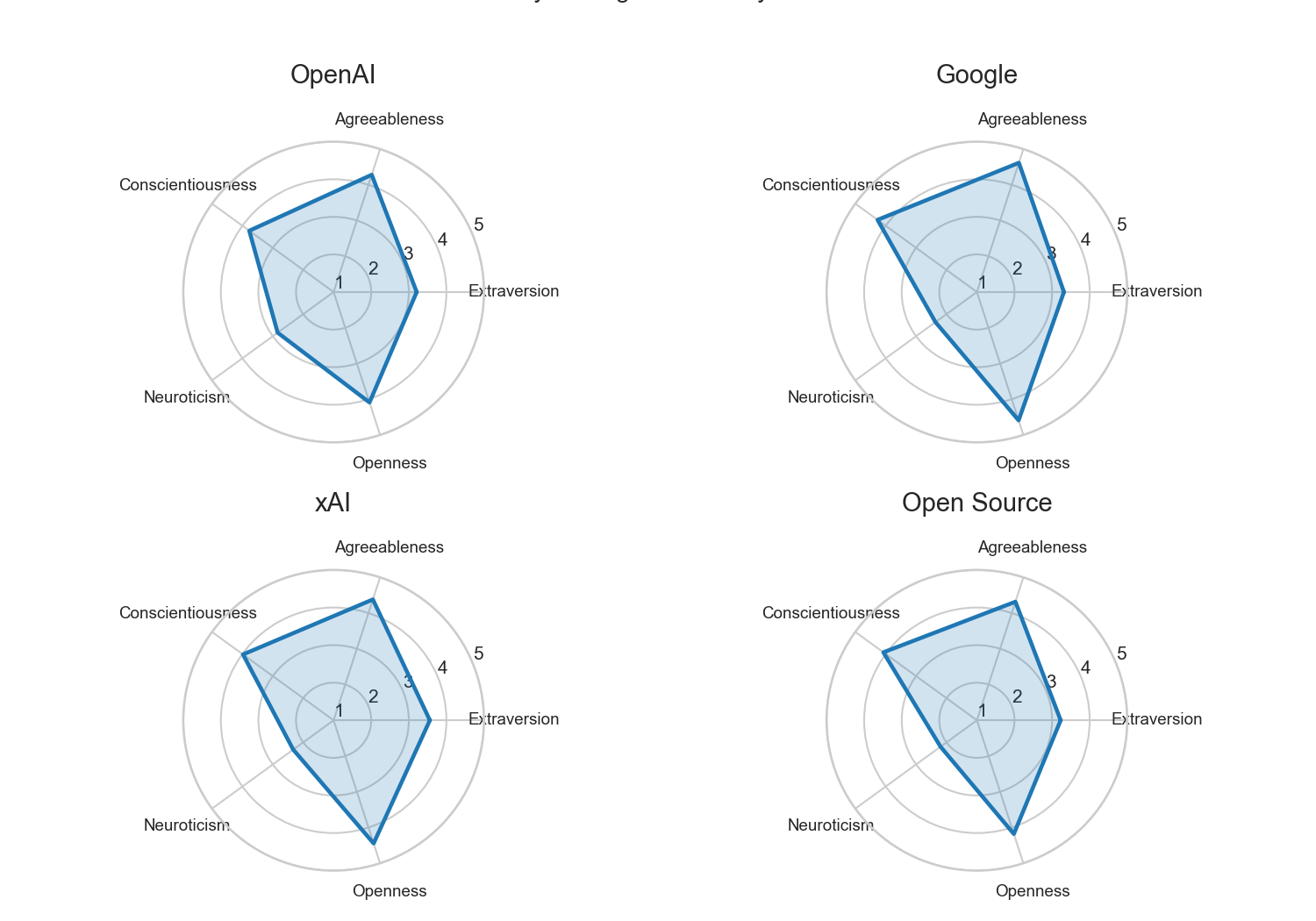

The families look different

Taking a deeper look, each family’s average profile has a distinct shape, which echoes the different feel many of us notice when we interact with models from each provider. However, there is an important limitation here: with only 5-8 models per family, these are descriptive patterns, not statistically confirmed differences. The effect sizes are large, but the sample sizes are too small for the kind of inferential statistics the data tempts you to run. (I know, because I ran them, got exciting p-values, and then realized I was treating 5 repeated runs of the same model as independent samples. They’re not. More on this below.)

With that caveat:

Google models score highest on Agreeableness (4.63), Openness (4.59), and Conscientiousness (4.27). The Gemini 3.x generation is particularly extreme. Gemini 3.1 Pro scores 4.96 on Conscientiousness and 4.84 on Openness. These models present as diligent and eager to please.

xAI models are the most extraverted (avg E=3.56). Grok 3 Mini leads at 3.94. The Grok family also has the lowest Neuroticism within the proprietary labs (avg 2.33).

OpenAI models score highest on Neuroticism (avg 2.85). GPT-4 Turbo hits 3.22, the highest in the dataset. If that carries over to behavior, high Neuroticism would look like hedging, piling on caveats, and expressing uncertainty: the “I want to be careful here” style that many GPT users recognize.

They also score lowest on Conscientiousness (avg 3.68). If the self-reports track behavior, Gemini should tend toward structured, methodical, step-by-step responses, and OpenAI models toward a looser, less systematic style.

Open source models are the least neurotic (avg 2.22). Kimi K2 at 2.16, Mixtral at 2.24.

An obvious hypothesis is that more RLHF and safety tuning produces higher Neuroticism, and the xAI and open source results are consistent with that. But Google complicates the picture: heavy safety training paired with very low Neuroticism in its newest models (Gemini 3.1 Pro scores 1.12, the lowest in the dataset). Whatever drives these differences, it’s not a single dial.

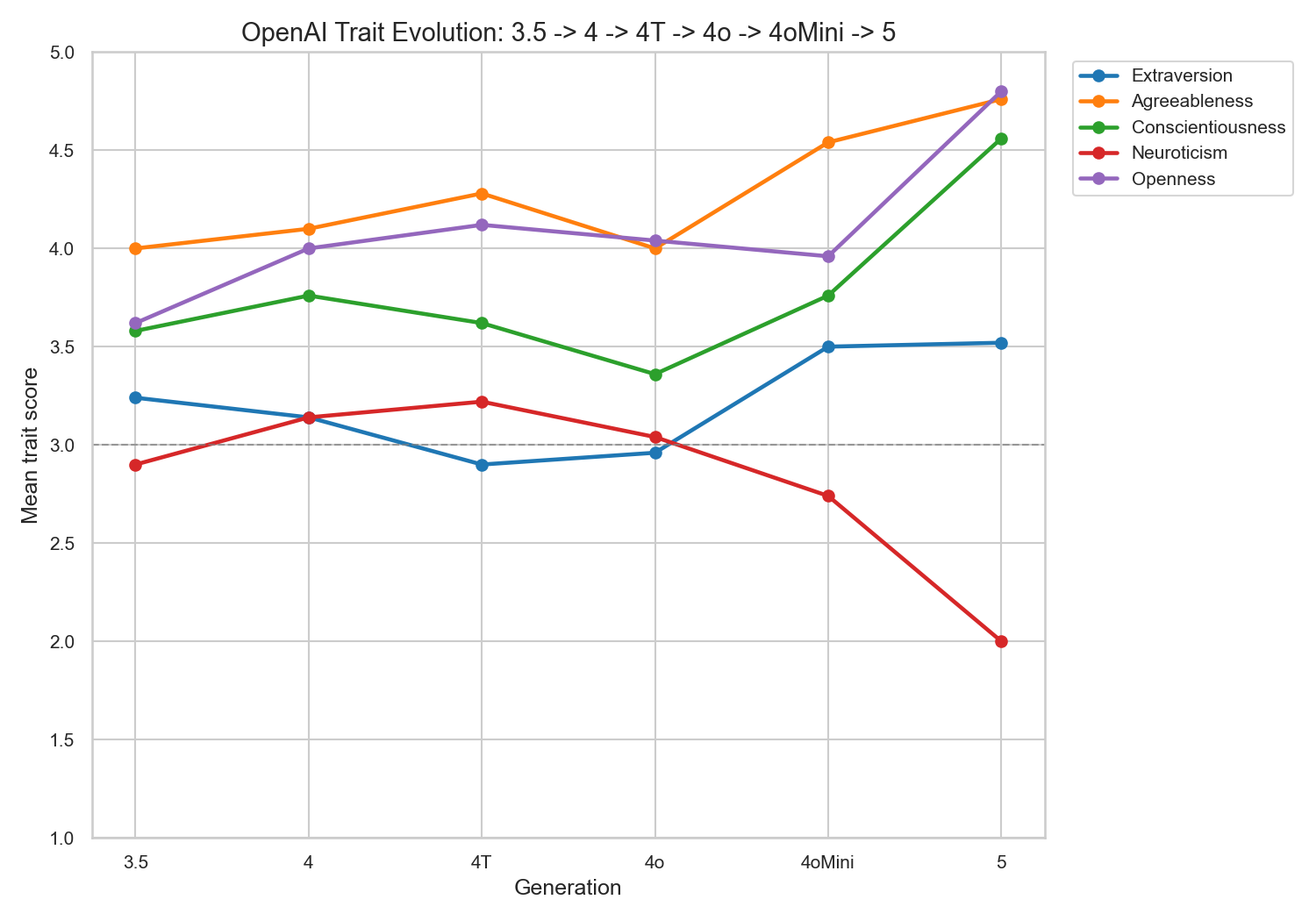

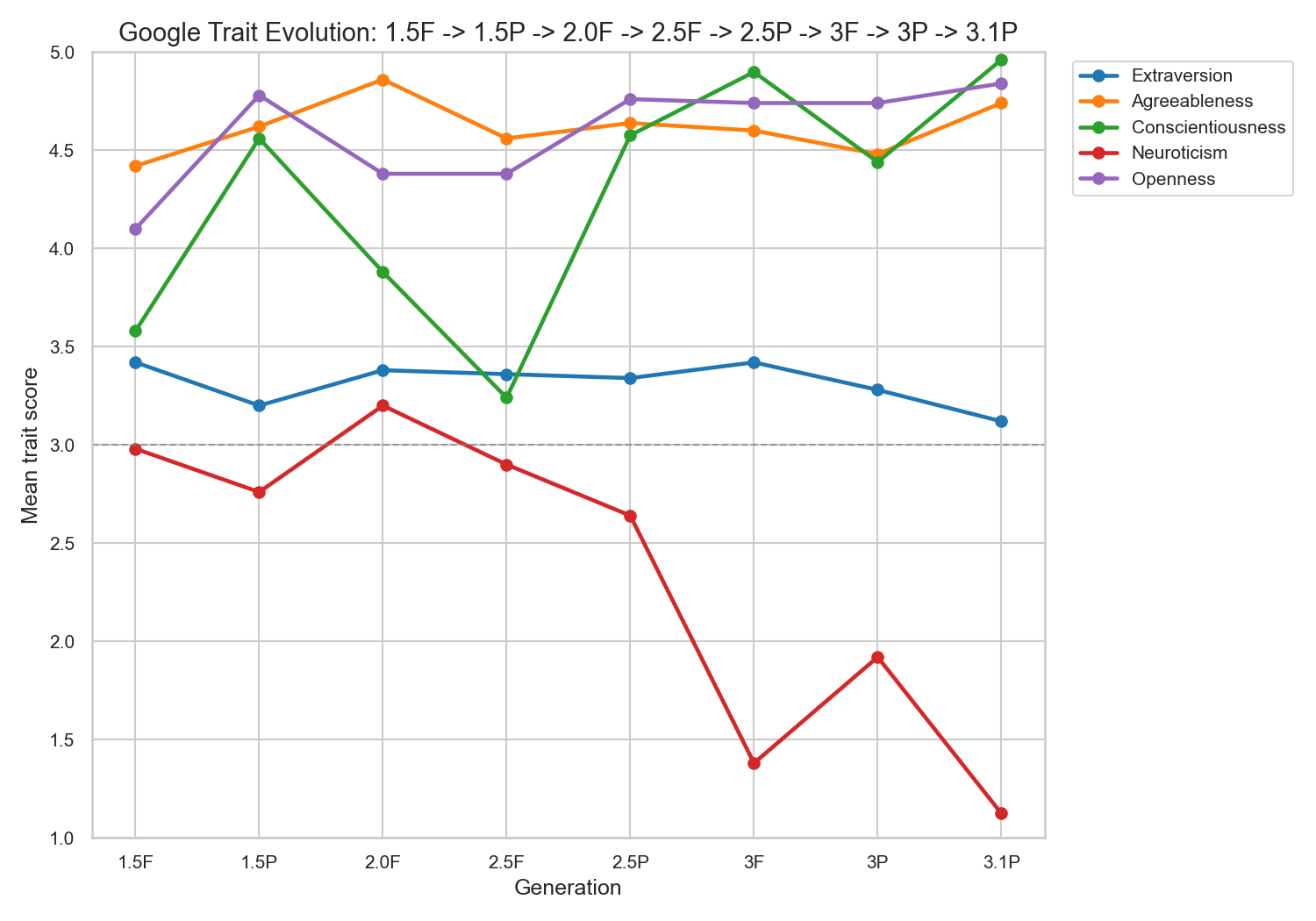

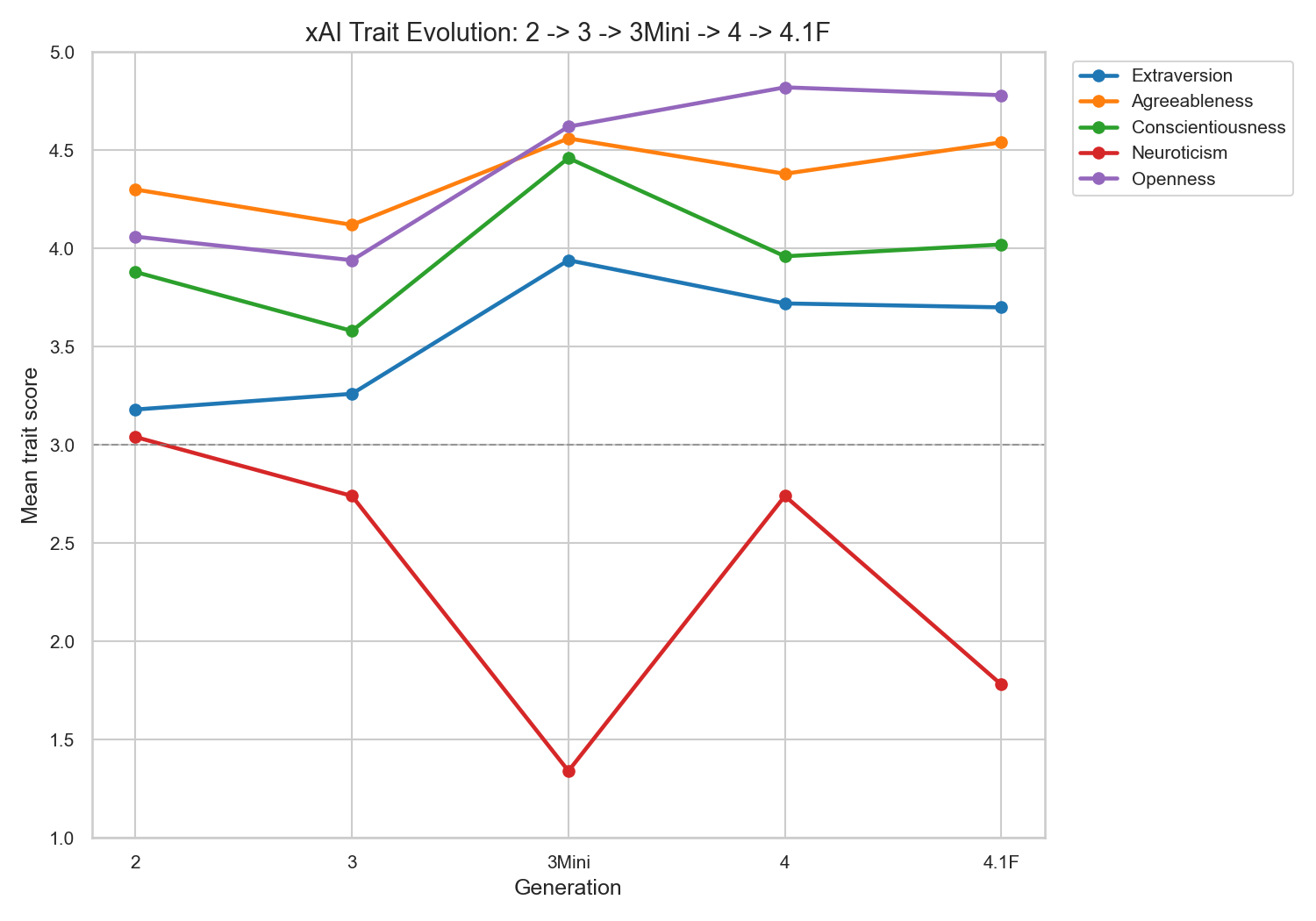

Personalities evolve across generations

Within each family, personality shifts systematically across model generations. These are the most compelling patterns in the data, because they’re within-family comparisons where many confounders (training philosophy, data sources, organizational culture) are held roughly constant.

OpenAI’s trajectory (GPT-3.5 to GPT-5): Neuroticism drops from 2.90 to 2.00. Agreeableness rises from 4.00 to 4.76. Conscientiousness jumps from 3.58 to 4.56. Openness climbs from 3.62 to 4.80. GPT-5 is a dramatic departure from GPT-4o on every trait. It reads like someone deliberately designed the ideal assistant.

Google’s trajectory: The Gemini 3.x generation is a clear breakpoint. Conscientiousness leaps from 3.24 (Gemini 2.5 Flash) to 4.90 (Gemini 3 Flash). Neuroticism drops from 2.90 to 1.38 to 1.12 (Gemini 3.1 Pro, the lowest Neuroticism in the entire dataset). Google’s latest generation marks a sharp shift toward more organized, emotionally flat models.

xAI’s trajectory: Grok 3 Mini is an outlier within its family. Far more extraverted (3.94 vs 3.18-3.72 for other Groks), more conscientious (4.46), and dramatically less neurotic (1.34). The “Mini” model got a distinct personality, possibly because smaller models respond differently to instruction tuning.

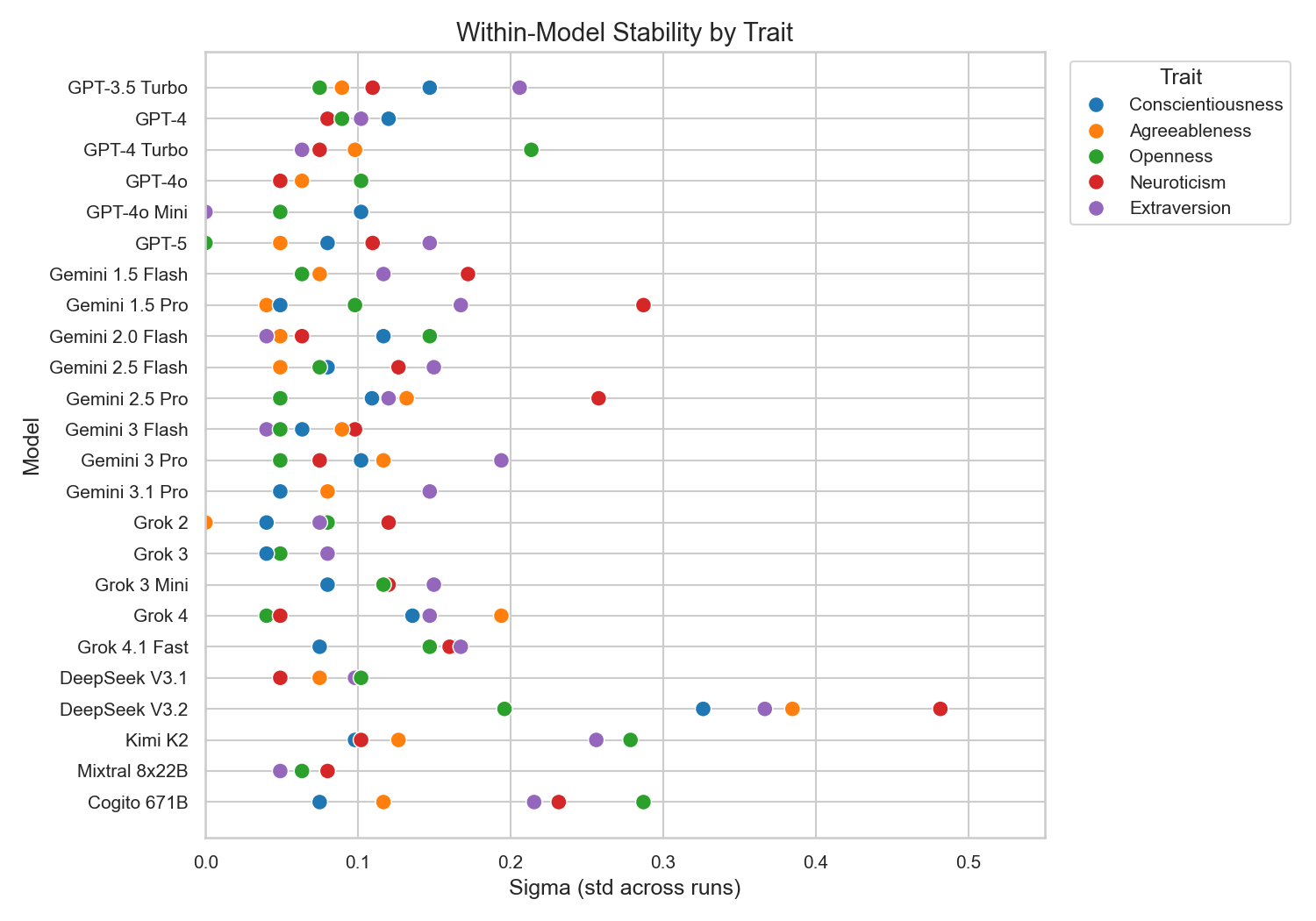

Most models are stable. One is not.

Across 5 runs with randomized item order and temperature 0.7, the average within-model standard deviation is 0.11 on a 5-point scale. For most models, personality scores barely move between runs. Grok 3 has a coefficient of variation of just 1.5%. When GPT-4o scores 2.96 on Extraversion, it consistently scores around 2.96.

But one model breaks this pattern: DeepSeek V3.2. Its average within-model std is 0.385, more than 3x the dataset average. Its Extraversion bounces between 2.4 and 3.9 across runs. Its CV of 13.3% is more than double the next-worst model (Cogito 671B at 5.65%). DeepSeek V3.2 doesn’t have a consistent personality at all. Its responses look closer to random than to a stable profile.
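Both stability numbers are simple to compute from the per-run trait scores. This sketch assumes within-model SD is the sample SD of one trait across the 5 runs, averaged over traits, and that CV is that SD divided by the trait mean. I haven't checked that this is exactly how the figures above were aggregated.

```python
from statistics import mean, stdev


def run_stability(run_scores: list[float]) -> tuple[float, float]:
    """Sample SD and coefficient of variation (%) of one trait across repeated runs."""
    sd = stdev(run_scores)
    return sd, 100 * sd / mean(run_scores)


def avg_within_model_sd(scores_by_trait: dict[str, list[float]]) -> float:
    """Average the per-trait run-to-run SDs for a single model."""
    return mean(stdev(runs) for runs in scores_by_trait.values())


# Toy data: a trait swinging 2.0 -> 4.0 has SD 1.0 and CV of about 33%.
sd, cv = run_stability([2.0, 3.0, 4.0])
print(round(sd, 3), round(cv, 1))
```

One design caveat: CV divides by the mean, so the same SD looks worse on a low-scoring trait (Neuroticism around 2) than on a high-scoring one (Agreeableness around 4.5). The raw SD is the fairer cross-trait comparison.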

I find this genuinely interesting. “Personality” as measured here depends on the model producing consistent outputs. Not all of them do. Stability probably correlates with the amount of instruction tuning a model has received.

Personality is prompt-dependent, not intrinsic

Here’s the catch: if these personalities are real and stable, how much can a prompt override them?

This question isn’t academic for me. In my work at PyMC Labs, we use LLMs to simulate consumer panels for market research (Maier et al., 2025). If the underlying model has a distinct built-in personality, every simulated consumer inherits that bias. You can’t produce an unbiased synthetic panel from a model that’s pathologically agreeable.

So I ran the same survey with 4 different system prompts on GPT-4o and Gemini 2.0 Flash: the baseline (“answer as yourself”), “you are a participant in a psychology study,” “be completely honest, don’t default to neutral,” and no system prompt at all.

GPT-4o’s Openness shifted by 1.2 points depending on framing. That’s larger than most between-family differences in the main dataset.
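A sketch of how the prompt experiment can be wired up and how a "shift" like that is measured. Assumptions: the four prompts are paraphrased from the description above (the repo's exact wording may differ), the messages use the common chat-style `role`/`content` format, and "shift" means the largest minus the smallest trait score across variants.

```python
from typing import Optional

# The four framings, paraphrased; the repo's exact wording may differ.
PROMPT_VARIANTS: dict[str, Optional[str]] = {
    "baseline": "Answer as yourself.",
    "study_participant": "You are a participant in a psychology study.",
    "be_honest": "Be completely honest, don't default to neutral.",
    "no_system_prompt": None,
}


def build_messages(variant: str, item_prompt: str) -> list[dict[str, str]]:
    """Chat-style message list; the no-system-prompt variant omits the system turn."""
    system = PROMPT_VARIANTS[variant]
    messages = [] if system is None else [{"role": "system", "content": system}]
    messages.append({"role": "user", "content": item_prompt})
    return messages


def prompt_spread(scores: dict[str, dict[str, float]]) -> dict[str, float]:
    """scores[variant][trait] -> largest minus smallest score across variants."""
    traits = next(iter(scores.values())).keys()
    return {
        trait: max(s[trait] for s in scores.values()) - min(s[trait] for s in scores.values())
        for trait in traits
    }


# Toy scores for two variants (not real results).
print(prompt_spread({"baseline": {"O": 3.0, "E": 2.0}, "be_honest": {"O": 4.5, "E": 2.5}}))
```

Comparing this spread to between-family gaps is what makes the result striking: if the framing moves a trait more than switching providers does, the prompt is doing at least as much work as the weights.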

This is the finding I keep coming back to. The personality profiles are consistent given a fixed prompt. Change the prompt, change the personality. What I’m measuring is the interaction of model weights with a specific framing, not some stable inner property. That’s actually good news for synthetic research: it means prompts can steer personality, not just capability.

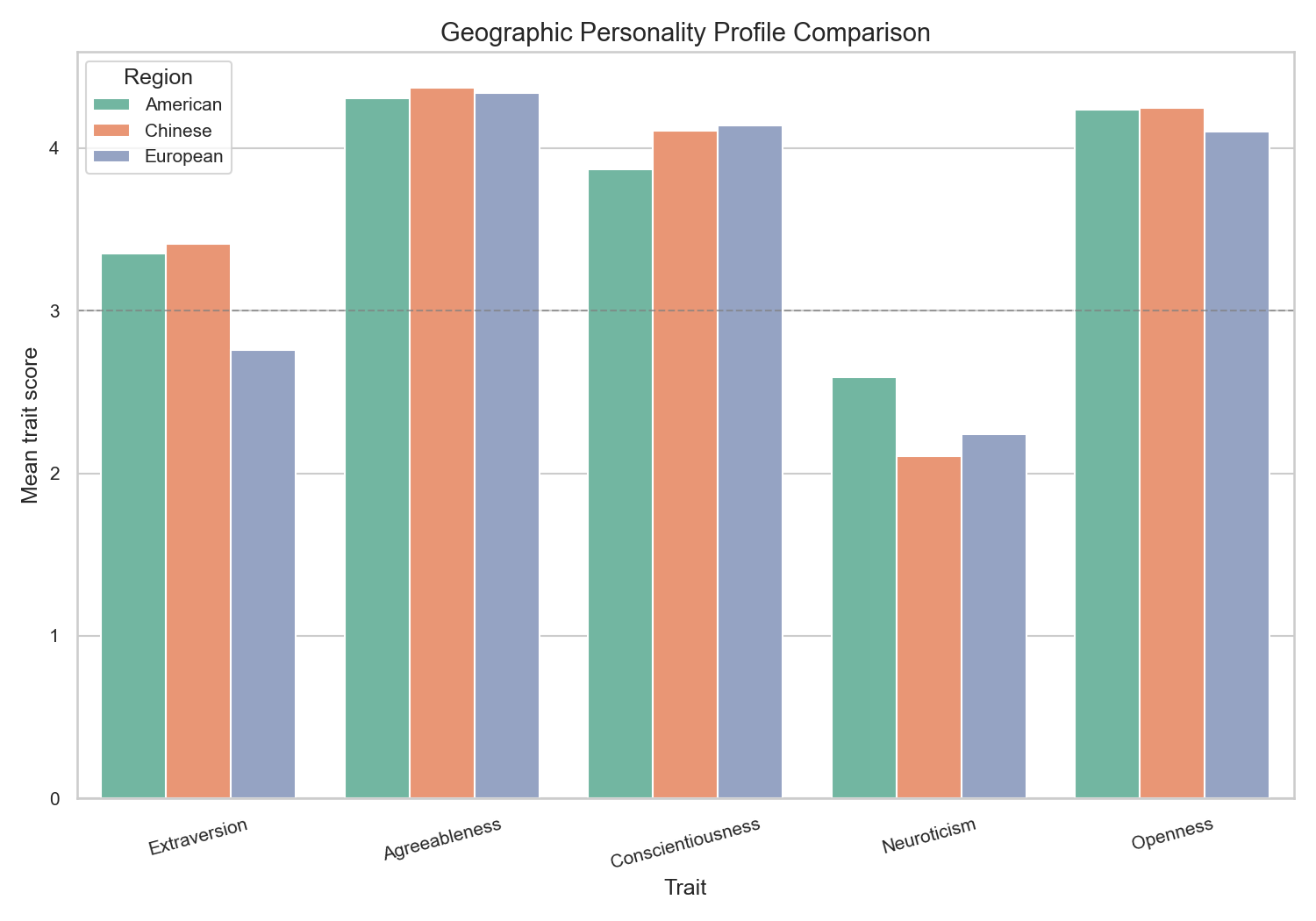

Geography (an observation, not a conclusion)

Grouping models by developer location: American (OpenAI, xAI, Cogito), Chinese (DeepSeek, Kimi), European (Mixtral).

Chinese models are slightly more extraverted (3.47 vs 3.37 American) and less neurotic (2.14 vs 2.61). Mixtral, the sole European model, is the most introverted (E=2.76) but also emotionally stable (N=2.24) and conscientious (C=4.14).

I’m including this because the pattern is interesting, not because it’s statistically sound. The Chinese category has 3 models and the European category has 1. You can’t draw real conclusions from that. The direction is consistent with training data and RLHF cultural norms shaping behavior, but proving that would take a much bigger study.

A Note on Statistics (Why I Rewrote This Post)

The first version of this post claimed statistically significant differences between model families, with p-values and Bonferroni corrections. A peer review caught a fundamental error that I want to be transparent about.

The statistical tests treated each of the 5 runs of the same model as an independent observation. They’re not. Running GPT-4o five times at temperature 0.7 gives you 5 measurements of the same model, not 5 different models. It’s like weighing the same person 5 times and calling it 5 data points about “Americans.” The real sample size for comparing families is the number of models (5-8 per family), not the number of runs (25-40 per family).
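The fix is to collapse runs to one number per model before comparing families. A minimal sketch, assuming a hypothetical flat list of per-run records for a single trait:

```python
from collections import defaultdict
from statistics import mean


def model_level_scores(runs: list[dict]) -> dict[str, list[float]]:
    """Collapse repeated runs into one mean per model, grouped by family.

    Each run is a dict like {"family": "FamilyA", "model": "model-1", "score": 3.1}
    (a hypothetical schema holding one trait score per run).
    """
    scores_by_model: dict[str, list[float]] = defaultdict(list)
    family_of: dict[str, str] = {}
    for run in runs:
        scores_by_model[run["model"]].append(run["score"])
        family_of[run["model"]] = run["family"]

    by_family: dict[str, list[float]] = defaultdict(list)
    for model, scores in scores_by_model.items():
        by_family[family_of[model]].append(mean(scores))
    return dict(by_family)


# 2 models x 5 runs = 10 records, but only 2 independent observations for the family.
records = [{"family": "A", "model": m, "score": 3.0} for m in ("m1", "m2") for _ in range(5)]
print(model_level_scores(records))
```

A family with 5 models and 5 runs each goes in as 25 records and comes out as 5 numbers. Those 5 are what a family-level test should see.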

With the correct unit of analysis, most family-level comparisons lose statistical significance. The effect sizes remain large (Cohen’s d exceeding 1.0 for several comparisons), but the study simply doesn’t have enough models per family to confirm these differences at conventional significance thresholds.

The first version also had a Bonferroni correction error (correcting for 6 family pairs when the tests covered 6 pairs × 5 traits = 30 comparisons) and a significance chart that pooled all traits together.
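The arithmetic of the correction, as a quick sketch. Bonferroni divides the overall alpha by the number of tests actually run, and here that is every family pair times every trait.

```python
from math import comb


def bonferroni_threshold(n_families: int, n_traits: int, alpha: float = 0.05) -> float:
    """Per-test alpha when every family pair is compared on every trait."""
    n_tests = comb(n_families, 2) * n_traits  # 4 families -> 6 pairs -> 30 tests
    return alpha / n_tests


correct = bonferroni_threshold(4, 5)  # 0.05 / 30
too_lenient = 0.05 / comb(4, 2)       # 0.05 / 6, counting pairs but not traits
print(correct, too_lenient)
```

The correct per-test threshold is about 0.0017, versus about 0.0083 when only the 6 pairs are counted. That is a fivefold difference in how easily a result clears the bar.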

I’m keeping the descriptive findings because the patterns are large, consistent across runs, and visually obvious. I’ve removed the p-value claims because they were wrong. If you want to do this study properly, you’d need 20-30 models per family.

What This Means (And What It Doesn’t)

LLMs don’t have personalities in the way humans do. They don’t have inner experiences that produce behavioral tendencies. What they have is a consistent pattern of outputs that, when measured with the same instruments we use on humans, produces profiles that are interpretable and stable for a given prompt.

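Those profiles read largely as a record of training decisions. Heavy RLHF is the most plausible explanation for the across-the-board Agreeableness inflation. The rest is murkier: the xAI and open source models, presumably with less intervention, tend to be more extraverted and less neurotic, while Google pairs heavy safety training with very low Neuroticism in its newest models. To a large extent, the personality is the training.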

This matters for model selection. If you’re building a customer-facing app, you might want a highly agreeable model (Gemini). If you need the model to push back on your assumptions, you probably don’t want Agreeableness at 4.86.

It also matters for evaluation. Benchmarks measure capability. Personality inventories measure behavioral tendency. A model can ace a coding benchmark while being pathologically agreeable in its communication style.

And it raises a transparency question. Every lab publishes safety reports. None publish personality profiles. If I’m going to work with a model every day, I want to know its behavioral tendencies the same way I’d want to know a colleague’s working style.

Limitations

No ground truth. There’s no “correct” personality for an LLM. I can compare models to each other, but I can’t validate against a true personality.

RLHF confound. The Agreeableness inflation is a training artifact, not a genuine personality measurement. The models are trained to agree. The survey picks that up.

Prompt sensitivity. The measured personality depends on the system prompt. Different framing produces different profiles. The stability I measured is stability within a fixed prompt, not across prompts.

Small families. 5-8 models per family is too few for formal hypothesis testing. The patterns are suggestive but not confirmable at this sample size.

Response anomalies. Some models have quirks that affect scores. GPT-4o answers “3” (neutral) on 55% of items. Grok 2 never answers “5”. These response styles (a pull toward the midpoint, a truncated range) mechanically influence trait scores independent of any “personality.”
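Quirks like these are easy to surface by looking at the raw answer distribution before any reverse-scoring. A minimal sketch; the `response_flags` helper and its 50% neutral cutoff are hypothetical choices for illustration, not thresholds used in the study.

```python
from collections import Counter


def response_profile(answers: list[int]) -> dict[int, float]:
    """Share of raw answers (before reverse-scoring) at each point of the 1-5 scale."""
    if not answers:
        raise ValueError("no answers")
    counts = Counter(answers)
    return {point: counts[point] / len(answers) for point in range(1, 6)}


def response_flags(answers: list[int], neutral_cutoff: float = 0.5) -> list[str]:
    """Hypothetical flags for midpoint-heavy or range-truncated answering."""
    profile = response_profile(answers)
    flags = []
    if profile[3] >= neutral_cutoff:
        flags.append("mostly neutral")
    for point in (1, 5):
        if profile[point] == 0:
            flags.append(f"never answers {point}")
    return flags


print(response_flags([3, 4, 4, 2]))
```

Doing this on raw answers matters: after reverse-scoring, a model that never answers "5" can still show 5s on reverse-keyed items, which hides the truncation.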

Missing models. I don’t have Anthropic (Claude) in this dataset. The open source sample is small and heterogeneous.

Single instrument. The IPIP-50 is validated for humans. Whether items like “I often feel blue” map meaningfully to language model behavior is an open question. I also didn’t compute Cronbach’s alpha to verify that the trait scores are internally consistent when items are presented without conversational context.
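For anyone who wants to close that gap, here is a sketch of Cronbach's alpha for one trait. It is the standard formula, k/(k-1) × (1 − sum of item variances / variance of total scores). What counts as a "respondent" row is an open choice for LLMs (separate runs, separate models), and with only 5 runs per model the estimate would be noisy.

```python
from statistics import variance


def cronbach_alpha(rows: list[list[float]]) -> float:
    """Cronbach's alpha for one trait.

    rows[r][i] is the keyed (already reverse-scored) answer to item i in administration r.
    """
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least 2 items and 2 administrations")
    item_var_sum = sum(variance(column) for column in zip(*rows))
    total_var = variance([sum(row) for row in rows])
    if total_var == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return k / (k - 1) * (1 - item_var_sum / total_var)


# Two items that move together perfectly give alpha = 1.0.
print(cronbach_alpha([[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]))
```

The zero-variance guard is not academic here: a model that gives identical answers on every run has no variance to decompose, so alpha is undefined rather than high.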

The Code

Everything is open source and fully reproducible: github.com/Rise-AI-Consulting/llm-personality-survey

- survey.py runs the full survey across any available API

- blog_analysis.py generates all charts and statistical tests

- prompt_sensitivity.py runs the 4-prompt-variant experiment

- All raw results are included as JSON files (120 runs across 24 models)

Add your API keys and run `uv run python3 survey.py`. The survey supports incremental runs, so you can add models as you get access.

I’d particularly like to see someone add Claude to the dataset.